☆ Yσɠƚԋσʂ ☆

- 2.99K Posts

- 3.26K Comments

6·20 hours ago

Right, which really suggests that email is not the right medium if you want genuine privacy.

5·1 day ago

Right, understanding your threat model is important. Then you can make a conscious choice regarding the trade-offs of using a particular service, and you understand what your risks are.

271·1 day ago

Metadata tracking should be very concerning to anyone who cares about privacy because it inherently builds a social graph. The server operators, or anyone who gets that data, can see a map of who is talking to whom. The content is secure, but the connections are not.

Being able to map out a network of relations is incredibly valuable. An intelligence agency can take the map of connections and overlay it with all the other data they vacuum up from other sources, such as location data, purchase histories, social media activity. If you become a “person of interest” for any reason, they instantly have your entire social circle mapped out.

Worse, the act of seeking out encrypted communication is itself a red flag. It’s a perfect filter: “Show me everyone paranoid enough to use crypto.” You’re basically raising your hand. So, in a twisted way, tools for private conversations that share their metadata with third parties are perfect machines for mapping associations and identifying targets such as political dissidents.
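To make the social graph point concrete, here is a minimal sketch of how little it takes. It assumes the only thing an observer has is a list of (sender, recipient) pairs with no message content at all. The names, the `records` list, and both helper functions are made up for illustration and do not describe any particular service.

```python
from collections import defaultdict

# Hypothetical metadata records: who contacted whom. No message content.
records = [
    ("alice", "bob"),
    ("alice", "carol"),
    ("bob", "carol"),
    ("dave", "alice"),
]

def build_social_graph(records):
    """Build an undirected contact graph from (sender, recipient) pairs."""
    graph = defaultdict(set)
    for sender, recipient in records:
        graph[sender].add(recipient)
        graph[recipient].add(sender)
    return graph

def contacts_of(graph, person):
    """Return the sorted list of everyone a person has exchanged messages with."""
    return sorted(graph.get(person, set()))

graph = build_social_graph(records)
print(contacts_of(graph, "alice"))  # ['bob', 'carol', 'dave']
```

Even this toy version recovers a person's whole circle from connection records alone, which is why encrypting the content by itself doesn't protect the relationships.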

9·2 days ago

the actual pirates of the Caribbean

5·2 days ago

Unknown at this time.

6·2 days ago

Open sourcing these things would definitely be the right way to go, and you’re absolutely right that it’s a general solver that would be useful in any scenario where you have a system that requires dynamic allocation.

122·3 days ago

live footage from Estonia

5·3 days ago

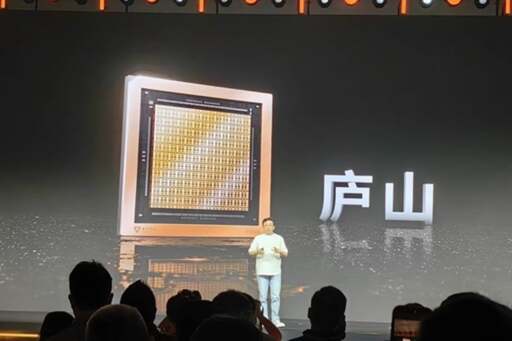

Yeah for sure, I do think it’s only a matter of time before people figure out a new substrate. It’s really just a matter of allocating time and resources to the task, and that’s where state level planning comes in.

3·3 days ago

it’s a pretty big case right now

5·3 days ago

mbfc told them so, liberals don’t have thoughts of their own

5·3 days ago

it’s like the most pretentious thing you could do online

142·3 days ago

lmfao imagine trotting out mbfc like it means anything you terminally online lib 🤣

101·4 days ago

It’s like saying silicon chips being orders of magnitude faster than vacuum tubes sounds too good to be true. A different substrate will have fundamentally different properties from silicon.

it’s so adorable how you’re trying to be edgy here, one day you’re going to grow and cringe at the memory of yourself, or maybe you won’t and just keep going through life like this

A comeback truly worthy of an edgy 13 year old. One day you’ll even grow pubes.

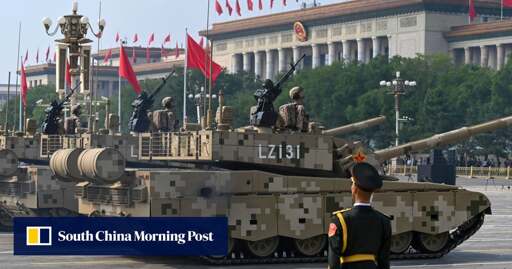

Same, there are a lot of tipping points that could end up being triggered in the near future. The effects could be utterly disastrous. Interestingly, places like Cuba or DPRK might be best prepared because they’re largely self-sufficient. China and Russia are likely in a good position as well because they have end-to-end domestic supply chains. The countries that will be most affected are the ones that leaned heavily into globalization and allowed their industries to become gutted. As supply chain disruptions due to climate disasters start becoming a commonplace occurrence, all these fragile just-in-time supply chains are going to crumble.